Why does musl make my Rust code so slow?

May 5, 2020

TL;DR: Stop using musl and alpine for smaller docker images!

During some recent benchmarking work on the Ballista Distributed Compute project, I discovered that the Rust benchmarks were ridiculously slow. After some brief debugging, it turned out that this was due to the use of musl. This blog post originally asked for help with the issue, but now provides some solutions.

My benchmark is packaged in Docker, and I had used musl to produce a statically linked executable, which was then copied into an alpine image, resulting in a small Docker image. I did this because it is an approach that I have seen suggested in many blog posts.

Here is the original Dockerfile:

# Base image extends rust:nightly which extends debian:buster-slim

FROM ballistacompute/rust-cached-deps:0.2.3 as build

# Compile Ballista

RUN rm -rf /tmp/ballista/src/

COPY rust/Cargo.* /tmp/ballista/

COPY rust/build.rs /tmp/ballista/

COPY rust/src/ /tmp/ballista/src/

COPY proto/ballista.proto /tmp/ballista/proto/

# workaround for Arrow 0.17.0 build issue

COPY rust/format/Flight.proto /format

RUN cargo build --release --target x86_64-unknown-linux-musl

# Copy the statically linked binary into an alpine container.

FROM alpine:3.10

# Install Tini for better signal handling

RUN apk add --no-cache tini

ENTRYPOINT ["/sbin/tini", "--"]

COPY --from=build /tmp/ballista/target/x86_64-unknown-linux-musl/release/executor /

USER 1000

ENV RUST_LOG=info

ENV RUST_BACKTRACE=1

CMD ["/executor"]

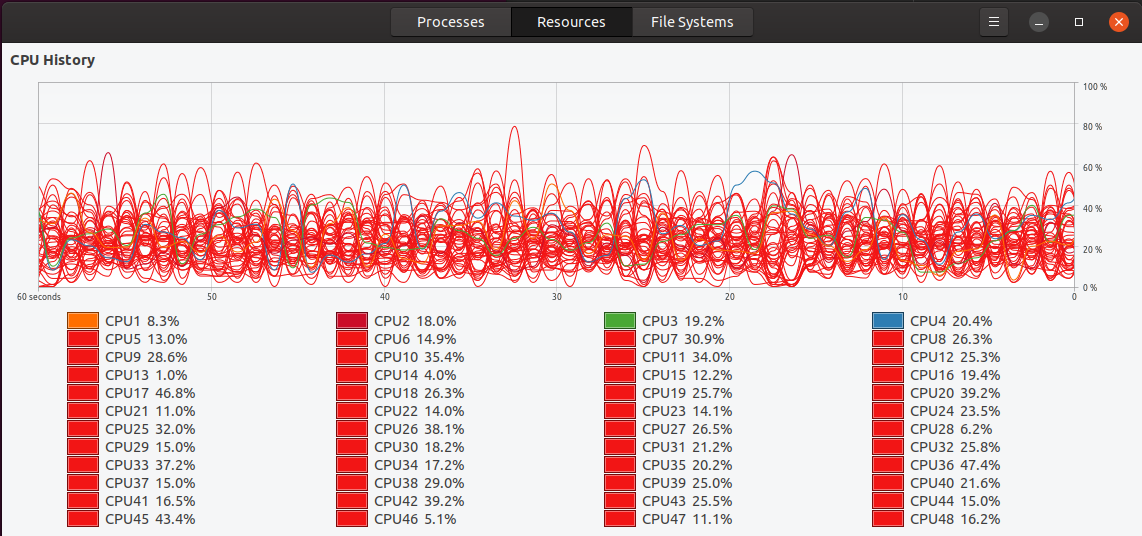

I ran some multi-threaded benchmarks on a 24-core desktop, using docker run --cpus=12 to allocate 12 cores, and I expected the benchmark to take 5-6 seconds based on the performance I saw when running natively on my desktop. Instead, the benchmark seemed to run forever (it turned out to take around 30x longer than expected), and the system monitor showed many threads using between 20% and 40% CPU during this time.

Removing musl

Suspecting that musl was the issue, I removed it from the Dockerfile, along with alpine, and just used the following instructions to run the release build.

RUN cargo build --release

ENTRYPOINT ["target/release/ballista-benchmarks"]

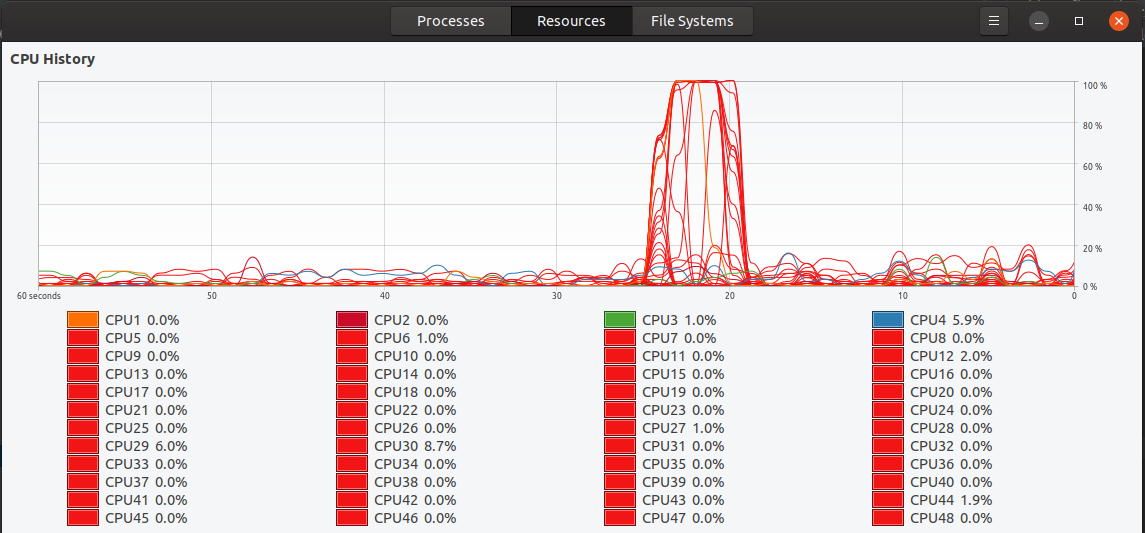

With this change, my benchmark performed as expected, and the system monitor showed some threads using 100% CPU for a short period of time. Great news, but now I’m back to a multi-gigabyte Docker image, which is not practical.
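When comparing two builds like this, one quick sanity check is to have the binary report which C library it was compiled against. The following is a minimal sketch using the standard cfg conditions target_env and target_feature = "crt-static"; the function names are hypothetical and not part of Ballista.

```rust
/// Returns the C library flavour this binary was compiled against,
/// based on the compile-time `target_env` setting.
fn libc_flavor() -> &'static str {
    if cfg!(target_env = "musl") {
        "musl"
    } else if cfg!(target_env = "gnu") {
        "gnu"
    } else {
        "other"
    }
}

/// Returns true when the C runtime is linked statically into the binary.
fn is_static_crt() -> bool {
    cfg!(target_feature = "crt-static")
}

fn main() {
    println!(
        "libc: {}, statically linked C runtime: {}",
        libc_flavor(),
        is_static_crt()
    );
}
```

Built with --target x86_64-unknown-linux-musl this should report musl, while a plain cargo build --release on Debian should report gnu.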

Possible solutions

A widely suggested solution was to switch to the jemalloc allocator when using musl. Ripgrep suffered from a very similar issue, which was resolved by switching to jemalloc when compiled with musl on 64-bit platforms. I did try this out, but my code started failing with segmentation faults and I’m not sure why. It is possibly due to unsafe code in one of my dependencies, but it will take a while to figure out.
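For context, Rust swaps allocators through the #[global_allocator] attribute. The sketch below is not the Ballista or ripgrep code. So that it compiles without external crates, it uses a hypothetical CountingAlloc wrapper around the standard System allocator, purely to show where the swap happens. With a jemalloc binding crate, the static would name that crate's allocator type instead.

```rust
use std::alloc::{GlobalAlloc, Layout, System};
use std::hint::black_box;
use std::sync::atomic::{AtomicUsize, Ordering};

/// Number of allocations served so far (illustration only).
static ALLOCATIONS: AtomicUsize = AtomicUsize::new(0);

/// Hypothetical stand-in allocator: forwards every request to the
/// system allocator and counts the calls.
struct CountingAlloc;

unsafe impl GlobalAlloc for CountingAlloc {
    unsafe fn alloc(&self, layout: Layout) -> *mut u8 {
        ALLOCATIONS.fetch_add(1, Ordering::Relaxed);
        unsafe { System.alloc(layout) }
    }

    unsafe fn dealloc(&self, ptr: *mut u8, layout: Layout) {
        unsafe { System.dealloc(ptr, layout) }
    }
}

// This static is the whole "switch": every Box, Vec and String in the
// program now goes through CountingAlloc. As an assumption about how one
// might restrict the swap to musl builds on 64-bit platforms, the static
// could be gated with
// #[cfg(all(target_env = "musl", target_pointer_width = "64"))].
#[global_allocator]
static GLOBAL: CountingAlloc = CountingAlloc;

/// Reads the current allocation counter.
fn allocation_count() -> usize {
    ALLOCATIONS.load(Ordering::Relaxed)
}

fn main() {
    let before = allocation_count();
    // black_box keeps the optimizer from eliding the allocation.
    let buf = black_box(vec![1u8; 4096]);
    let after = allocation_count();
    println!(
        "{} allocation(s) for a {}-byte buffer",
        after - before,
        buf.len()
    );
}
```

Because the allocator is chosen once for the whole program, any unsafe code that assumes a particular allocator can misbehave after the swap, which is one possible explanation for crashes like the ones described above.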

Also, some people suggested that the performance problems in musl go deeper than just the allocator issue, and that there are fundamental issues with threading in musl, potentially making it unsuitable for my use case.
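One way to probe this kind of claim is a small allocation-heavy micro-benchmark that is run with an increasing number of threads and compiled once for each target. The sketch below is a hypothetical workload, not the Ballista benchmark; the buffer size, iteration count and thread counts are arbitrary assumptions.

```rust
use std::hint::black_box;
use std::thread;
use std::time::{Duration, Instant};

/// Hypothetical workload: allocate and free many small buffers.
/// Returns the total number of bytes allocated.
fn alloc_heavy_work(iterations: usize) -> usize {
    let mut total = 0;
    for i in 0..iterations {
        // black_box keeps the optimizer from eliding the allocation.
        let buf = black_box(vec![i as u8; 64]);
        total += buf.len();
    }
    total
}

/// Runs the workload on `threads` threads in parallel and returns the
/// wall-clock time together with the total bytes allocated.
fn run(threads: usize, iterations: usize) -> (Duration, usize) {
    let start = Instant::now();
    let handles: Vec<_> = (0..threads)
        .map(|_| thread::spawn(move || alloc_heavy_work(iterations)))
        .collect();
    let total: usize = handles.into_iter().map(|h| h.join().unwrap()).sum();
    (start.elapsed(), total)
}

fn main() {
    for threads in [1, 2, 4, 8, 12] {
        let (elapsed, total) = run(threads, 200_000);
        println!("{threads:>2} threads: {elapsed:?} ({total} bytes allocated)");
    }
}
```

Each thread does the same amount of work, so with an allocator that scales well the elapsed time should stay roughly flat as threads are added (up to the number of available cores). A time that grows steeply with the thread count would point to contention.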

Given these concerns over musl, I decided to go with a different solution. It turns out that I could keep the same multi-stage Dockerfile approach but use debian:buster-slim as the final base image instead of alpine. This no longer requires musl, because the build image is itself ultimately based on debian:buster-slim, so the dynamically linked binary finds a compatible glibc in the final image.

Here is the new Dockerfile.

# Base image extends rust:nightly which extends debian:buster-slim

FROM ballistacompute/rust-cached-deps:0.2.3 as build

# Compile Ballista

RUN rm -rf /tmp/ballista/src/

COPY rust/Cargo.* /tmp/ballista/

COPY rust/build.rs /tmp/ballista/

COPY rust/src/ /tmp/ballista/src/

COPY proto/ballista.proto /tmp/ballista/proto/

# workaround for Arrow 0.17.0 build issue

COPY rust/format/Flight.proto /format

RUN cargo build --release

# Copy the binary into a new container for a smaller docker image

FROM debian:buster-slim

COPY --from=build /tmp/ballista/target/release/executor /

USER root

ENV RUST_LOG=info

ENV RUST_BACKTRACE=full

CMD ["/executor"]

With this change, I now have an 89 MB Docker image, and the performance I was expecting. Yay!

Thanks again to everyone who contributed to the discussions on /r/rust and Hacker News to help me resolve this issue!